Tuesday, February 15, 2011

Monday, February 14, 2011

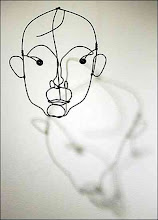

Who is...?

In the struggle to create an artificial intelligence that is truly “intelligent”, rather than a mere imitation of intelligence, the greatest obstacle may be ambiguity. AI software tends to have trouble teasing out the meanings of puns, for example; it’s one of the ways of getting a machine to fail the Turing Test. Computers have gotten pretty good at playing chess (IBM’s Deep Blue defeated world champion Garry Kasparov in a well-publicized 1997 match), but until now haven’t graduated far beyond that level of complexity.

Well, IBM is at it again, and their new system, Watson, is going on Jeopardy against the game show’s two biggest winners. It’s a very big deal, because Jeopardy’s clues are frequently built around puns and other kinds of word play, ambiguities that often throw machines off their game.

This story from NPR summarizes it well, but it expresses an interesting and commonly held assumption that I think warrants re-examination. The piece reports that systems like Watson have trouble with ambiguity because, though they can understand relations among words (that is, they can identify syntactic patterns and figure out how a sentence is put together, using that information to home in on what kind of question is being asked), they can’t understand the meanings of words because they don’t have experience to relate to those words.

Embedded in this claim is the assumption that humans do have experience (of course!) and that our experience comes from direct, unmediated access to the world around us. When we read the word “island” we have a deep understanding of what an island is, because we’ve seen islands. Computers can’t do this, because they haven’t experienced islands or even water or land, the argument goes. This is a version of the Chinese Room argument: the argument that semantics cannot be derived from syntax.

This argument just doesn’t hold up, to my way of thinking. First of all, some humans (desert dwellers, for example) may have never seen islands, but that would not inhibit their ability to understand the concept of island-ness. Helen Keller became able to understand such concepts though she had lost both her sight and her hearing at 19 months of age, long before she could have learned them through those senses.

Second, our perception of the external world is not unmediated. Our perceptions are generated by a hardware system that converts light into electrical signals, which are then processed and stored in the brain, not unlike the way a digital camera converts light into images on your laptop. Research shows that there is a clear delay between physical processing and awareness of perceptions. And the complexity of human perception is starting to seem less and less unique; computers are becoming better and better at processing physical information like images and sounds: face recognition and voice recognition software, for example, or the apps that can identify your location from a photo or identify a song from a snippet that you record. The main difference is that in humans, there is an intelligence behind the process; in the computer there is not.

Which leads us ultimately to the question that Watson’s creators are trying to answer. What is intelligence?

The answer must be in the way the information is processed. Intelligence is likely ultimately to come from the complexity of association (including self-reflexiveness, a kind of association) that is embedded in the system. The human brain is in a constant state of association and context building. My brain has been working on context and association building for 45 years (and that is a kind of experience). Why shouldn’t computers be capable of this kind of processing, that is, of learning? (Computers are, of course, capable of learning, in adaptive computer games, for example, just not yet at the level of complexity of humans.)

What we don’t completely understand about human cognition are the processes used to find information. Is it a vertical searching system, a horizontal searching system, some hybrid of vertical and horizontal with some trial and error thrown in? Something else? We just don’t know yet.

But cracking the code for pun recognition and “understanding” word play and jokes may get us a big step closer to an answer. Watson may lose, and he may win (he probably will win, as he did in trial runs for the show). If Watson loses, I hope he won’t feel bad about it; many humans have trouble with ambiguity, too.
